HSTS, or HTTP Strict Transport Security, is a means of ensuring that your connection to a site is secure. It is a feature of modern browsers designed to prevent, for example, man-in-the-middle attacks in which you request a secure resource, such as https://mybank.com, and a malicious third party redirects you over a non-secure connection to http://mybank.com. Note the missing “s” in the second URL scheme.

How HSTS Works

Browsers typically solve this problem by storing security preferences in a small data structure. In its simplest form, this is a key-value index, where the key is the site’s host name and the value is a boolean indicating whether or not connections to that host must be established in a secure manner:

    _____________________
    google.com      | 1 |
    bing.com        | 1 |
    apple.com       | 1 |
    _____________________

Note that the above example indicates that all requests to google.com, bing.com, and apple.com should be made in a secure manner, over HTTPS. We can infer, then, that hosts with no entry in the HSTS database allow non-secure connections. Viewed in tabular format, this would resemble the following, where yahoo.com and wordpress.com are shown with a 0 because neither has an entry in the browser’s HSTS database:

    _____________________
    google.com      | 1 |
    yahoo.com       | 0 |
    bing.com        | 1 |
    wordpress.com   | 0 |
    apple.com       | 1 |
    _____________________

When read as a single value, the complete sequence of boolean values for this table reads as “10101”. It is therefore possible to leverage this table to store arbitrary binary values.
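To make the idea concrete, here is a minimal sketch of reading that table as a bit string. The `hosts` array, the `hstsEntries` set and the `toBitString` function are names I have made up for illustration; a real browser does not expose its HSTS database like this.

```javascript
// Hypothetical view of the table above: the hosts in a fixed order, plus the
// set of hosts that currently have an HSTS entry.
const hosts = ["google.com", "yahoo.com", "bing.com", "wordpress.com", "apple.com"];
const hstsEntries = new Set(["google.com", "bing.com", "apple.com"]);

// Emit "1" for hosts that have an entry and "0" for hosts that do not.
// The host order must stay fixed, otherwise the bits would not line up.
function toBitString(orderedHosts, entries) {
  return orderedHosts.map((host) => (entries.has(host) ? "1" : "0")).join("");
}

console.log(toBitString(hosts, hstsEntries)); // prints "10101"
```

The important detail is the fixed ordering: the value is only meaningful if every read walks the hosts in the same sequence.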

However, it is the sites themselves that determine whether or not they should be accessed over a secure connection. This is achieved by returning an HTTP 301 response to requests made over non-secure channels. The response includes a Location header that references the secure URL. The browser honours this response by redirecting to the secure URL, which returns the following HTTP header:

Strict-Transport-Security: max-age=31536000

Note that the max-age parameter, expressed in seconds, may be set as required; the value above (31536000, or 365 days) is simply an example.

The browser, upon receiving this response, will add an entry to its HSTS database, indicating that all future requests should be established over a secure channel.
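The bookkeeping described above can be modelled in a few lines. This is a deliberately simplified sketch and not how any particular browser implements it: the `HstsStore` class and its method names are my own, and it ignores directives such as includeSubDomains.

```javascript
// Simplified model of a browser's HSTS store: host name -> expiry time in ms.
class HstsStore {
  constructor() {
    this.expiryByHost = new Map();
  }

  // Record a Strict-Transport-Security header that was received over HTTPS.
  noteHeader(host, headerValue, nowMs) {
    const match = /max-age\s*=\s*"?(\d+)"?/i.exec(headerValue);
    if (!match) return; // no usable max-age directive, so ignore the header
    const maxAgeSeconds = Number(match[1]);
    if (maxAgeSeconds === 0) {
      this.expiryByHost.delete(host); // max-age=0 removes the entry
    } else {
      this.expiryByHost.set(host, nowMs + maxAgeSeconds * 1000);
    }
  }

  // True when a request to this host must be upgraded to HTTPS.
  mustUseHttps(host, nowMs) {
    const expiry = this.expiryByHost.get(host);
    return expiry !== undefined && expiry > nowMs;
  }
}

const store = new HstsStore();
store.noteHeader("mybank.com", "max-age=31536000", 0);
console.log(store.mustUseHttps("mybank.com", 1000)); // true
console.log(store.mustUseHttps("yahoo.com", 1000)); // false
```

Timestamps are passed in explicitly rather than read from the clock, which keeps the sketch easy to reason about.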

How to Hack HSTS

In order to “save” a binary value to the HSTS database, we need to control the URL entries that will reside within the database. Let’s assume that I own the following 4 domains:

    1.supercookies.com
    2.supercookies.com
    3.supercookies.com
    4.supercookies.com

I configure each of these sites to indicate that connections should only be established over secure channels. Imagine, then, that I create a website containing a JavaScript file that generates a random 4-digit binary value; in this case, “1010”.

In order to “save” this value, my JavaScript file should contain a function that connects to both 1.supercookies.com and 3.supercookies.com. This will create the following entries in the HSTS database:

    _____________________________
    1.supercookies.com      | 1 |
    3.supercookies.com      | 1 |
    _____________________________
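The write step boils down to choosing which hosts to contact. The sketch below assumes the naming scheme from the example (bit 1 lives on 1.supercookies.com, and so on); the function names and the `/write` path are hypothetical placeholders, not part of the sample code.

```javascript
// Assumed naming scheme: bit i (1-based, left to right) is stored
// under "<i>.<baseDomain>".
function hostsForBits(bits, baseDomain) {
  const selected = [];
  for (let i = 0; i < bits.length; i++) {
    if (bits[i] === "1") selected.push(`${i + 1}.${baseDomain}`);
  }
  return selected;
}

// Hypothetical helper: each of these URLs would be requested by the page
// (for example as an image) so that the host can answer with its
// Strict-Transport-Security header. The "/write" path is a placeholder.
function writeUrls(bits, baseDomain) {
  return hostsForBits(bits, baseDomain).map((host) => `https://${host}/write`);
}

console.log(hostsForBits("1010", "supercookies.com"));
// -> ["1.supercookies.com", "3.supercookies.com"]
```

Hosts whose bit is 0 are never contacted, so no entry is created for them.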

Taking the other two domains into account, our view of all four domains, expressed in tabular format, would resemble the following:

    _____________________________
    1.supercookies.com      | 1 |
    2.supercookies.com      | 0 |
    3.supercookies.com      | 1 |
    4.supercookies.com      | 0 |
    _____________________________

In other words, we implement a custom endpoint on each domain that simply returns a boolean indicating whether or not the inbound HTTP request is secure. That value tells us whether or not there is an entry in the browser’s HSTS database for that domain. For example, if we initiate a connection to http://1.supercookies.com (note the non-secure HTTP scheme), then we would expect the browser to rewrite the request to the secure equivalent of that URL (https://1.supercookies.com) before sending it. Thus, if our endpoint returns a positive boolean, we can infer that this domain is present in the browser’s HSTS database. Otherwise, the domain is not present, and our endpoint returns a negative boolean. The read endpoint must not itself redirect or return the HSTS header, otherwise the act of reading would set the bit. By establishing connections to each domain, we can build a series of boolean values; in this case, “1010”.
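Once every host has answered, reassembling the value is straightforward. The sketch below assumes each answer has already been collected into a plain object; the `index`, `host` and `secure` field names are my own invention for illustration.

```javascript
// Each probe is the answer from one host's read endpoint after the browser
// was asked to load the plain http:// URL. `secure` is true when the request
// arrived over HTTPS, which suggests the browser upgraded it because of HSTS.
function bitsFromProbes(probes) {
  return probes
    .slice() // copy so the caller's array is not reordered
    .sort((a, b) => a.index - b.index) // restore the fixed bit order
    .map((probe) => (probe.secure ? "1" : "0"))
    .join("");
}

// Answers may arrive in any order, so they are listed out of order here.
const probes = [
  { index: 3, host: "3.supercookies.com", secure: true },
  { index: 1, host: "1.supercookies.com", secure: true },
  { index: 4, host: "4.supercookies.com", secure: false },
  { index: 2, host: "2.supercookies.com", secure: false },
];

console.log(bitsFromProbes(probes)); // prints "1010"
```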

Practical Example with ASP.NET Web API

Add the following ASP.NET Web API Controller method to write an entry to the HSTS database for the domain that hosts your ASP.NET application:

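    // Requires: using System; using System.Net; using System.Net.Http;
    public HttpResponseMessage Write()
    {
        HttpResponseMessage response;

        if (Request.RequestUri.Scheme == Uri.UriSchemeHttps)
        {
            // Secure request: return the HSTS header so the browser records this host.
            response = Request.CreateResponse(HttpStatusCode.NoContent);
            response.Headers.Add("Strict-Transport-Security", "max-age=31536000");
            return response;
        }

        // Non-secure request: redirect to the HTTPS equivalent of the same URL.
        // UriBuilder swaps only the scheme and leaves the path and query untouched.
        var secureUri = new UriBuilder(Request.RequestUri)
        {
            Scheme = Uri.UriSchemeHttps,
            Port = -1 // use the default HTTPS port
        };
        response = Request.CreateResponse(HttpStatusCode.MovedPermanently);
        response.Headers.Location = secureUri.Uri;
        return response;
    }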

For a request made over plain HTTP, the above method returns an HTTP 301 that instructs the browser to redirect to the secure equivalent of the original URL. Upon redirecting, the browser receives the HSTS header, which results in an entry in the HSTS database for the domain that hosts your ASP.NET application.

Add the following method in order to read the HSTS entry (if present) for the domain that hosts your ASP.NET application:

    public class HSTSResponse
    {
        public bool IsSet { get; set; }
    }

    // Reports whether the inbound request arrived over HTTPS.
    public HSTSResponse Read()
    {
        return new HSTSResponse
        {
            IsSet = Request.RequestUri.Scheme == Uri.UriSchemeHttps
        };
    }

This method returns a positive boolean value if the inbound HTTP request is secure, implying that the upstream browser contains an entry in its HSTS database for the domain that hosts your ASP.NET application.

Generating Tracking IDs

It is not necessary to compile or run the source code – simply browse to the included index.html file in order to demonstrate the process. You can, of course, run the application locally if you wish.

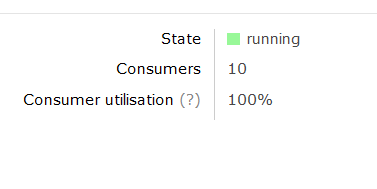

The complete code leverages 4 external websites, as per the above example, in order to generate a binary value and indirectly store it in the HSTS database. Leveraging 4 external websites yields a total of 2^4 = 16 possible unique values, hardly enough to constitute a unique tracking mechanism. However, consider that if we own 32 external domains, we can control 2^32, or roughly 4.3 billion, unique tracking IDs using this method. Note that the tracking ID in the sample code is rendered as Base-36 for legibility.
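The arithmetic is easy to check: n independent bits give 2^n distinct values. The sketch below also shows one plausible way to render a bit string as Base-36; `idSpace` and `toBase36` are illustrative names, and the sample code may do the conversion differently.

```javascript
// Number of distinct IDs that bitCount independent HSTS bits can represent.
function idSpace(bitCount) {
  return 2 ** bitCount;
}

// Render a bit string as Base-36. parseInt is exact here because 32 bits
// fit comfortably within Number.MAX_SAFE_INTEGER.
function toBase36(bits) {
  return parseInt(bits, 2).toString(36);
}

console.log(idSpace(4)); // 16
console.log(idSpace(32)); // 4294967296
console.log(toBase36("1010")); // "a"
```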

Why Use the HSTS Database as a Storage Mechanism

Cookies can be removed, edited, and faked. Leveraging the HSTS database as a storage mechanism potentially reduces the possibility that your tracking ID will be deleted. While this style of design is generally considered unscrupulous, the purpose of this post is to educate; whether or not this mechanism should be implemented in the wild is a matter of opinion that I leave up to the reader.
